USE CASE

Sifting existing literature for evidence relating to a research topic is a core element of clinical and life sciences research.

With DISQOVER's unique features, finding, assembling, and curating that evidence become a lot less time-consuming – enhancing productivity and giving you a leg up on your competition.

Taking inventory of all previously published knowledge on a specific topic is a daunting task for researchers. Evidence is often scattered across sources, and digging it all up usually requires multiple searches across different platforms.

This added complexity forces researchers to curate and review results manually to avoid duplicates, costing organizations months of time and thousands of dollars per review. The process is slow, expensive, and does not scale.

One way to address this is to make sure that researchers use published literature for their research.

Publications are versions of scientific work that have been accepted through peer review. They are written in a standardized format so that other researchers can access them easily and find relevant information quickly.

Scientists have relied on publications as a source of knowledge for years; historically, however, work that had not undergone peer review or had not appeared in a high-impact journal was often not treated as a scientific source.

With the development of digital technologies, this view has changed, because publications can now be found online and accessed easily.

In fact, the impact of publications is growing rapidly, as they are widely used by scientists to find relevant information for their research. It is now common to find scientific papers on social media or on websites that share scientific information.

Scan numerous publications simultaneously for literature and evidence relevant to your case.

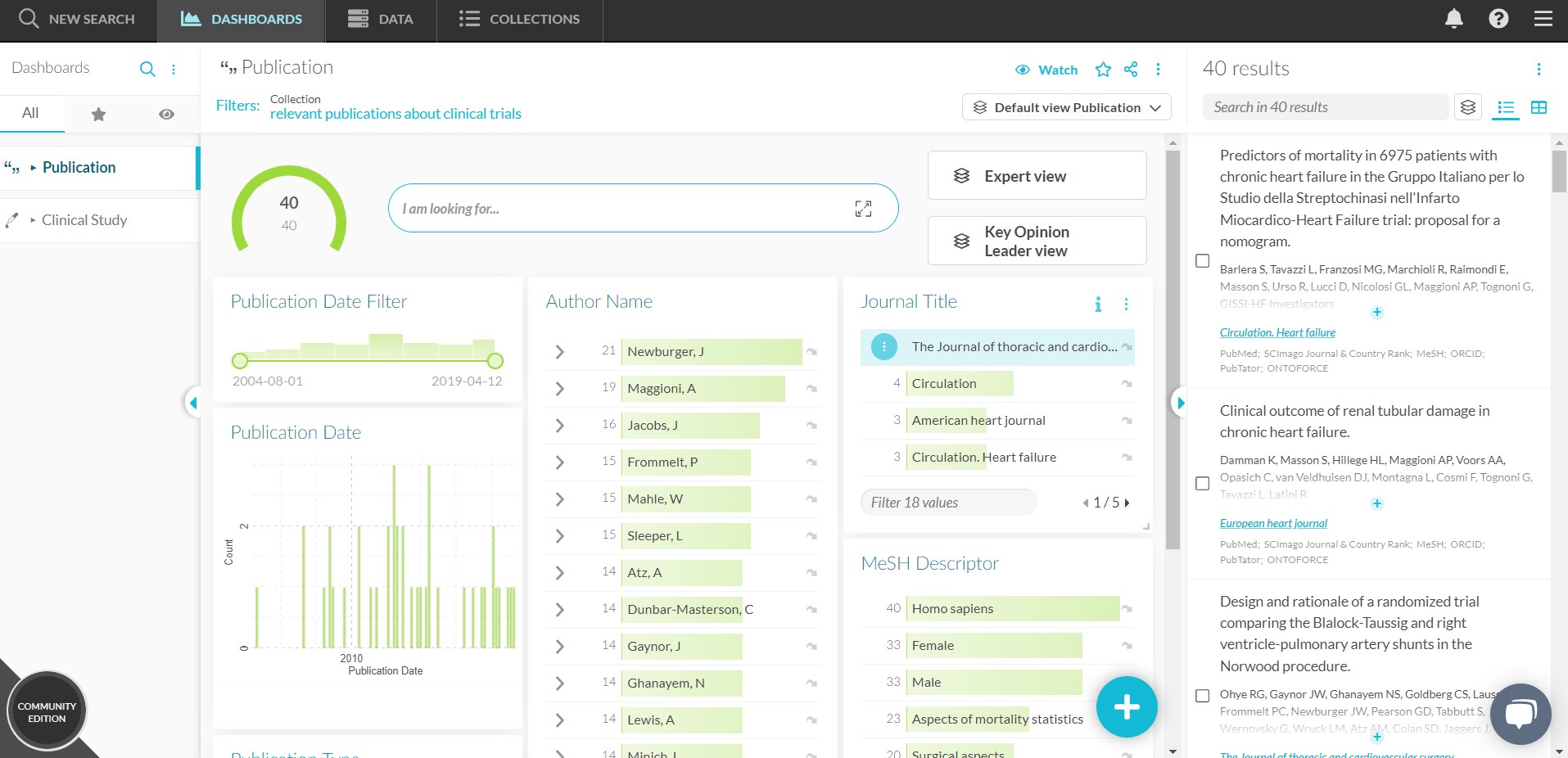

Rely on a consistent and intuitive user interface that harmonizes search results and makes information curation easier than ever.

Speed up the research process, boost productivity, and lower costs while accelerating time to value.

DISQOVER integrates numerous publication sources into one consistent and intuitive interface. Literature search results are harmonized into a common data model, significantly reducing the risk of duplicates and enhancing data curation. As a result, researchers can get the comprehensive results and the evidence they need faster, accelerating research.

DISQOVER is a knowledge platform that combines natural language processing (NLP) technology with a modern and intuitive user interface. The platform enables researchers to quickly scan, search, browse, and navigate through all available evidence in one place. This approach allows them to find relevant information quickly, no matter the format, content, or source.

DISQOVER uses various patented technologies to support full-text search and analysis of unstructured content. Its Data Ingestion Engine is integrated into the Knowledge Platform, allowing it to read through previously published material and extract the key points in seconds. The engine can also be configured with specific keywords or phrases, so it can pull up evidence on issues specific to your organization’s interests. As a result, researchers get the comprehensive results they need faster than ever before.

The Knowledge Platform also lets researchers browse previously published material by country or region, by topic area or keyword, or by publication source, so insights surface sooner.

Experience a live walkthrough of DISQOVER with one of our experts, and see how our knowledge discovery platform can help you accelerate your drug development activities.

© 2026 ONTOFORCE. All rights reserved.