Have an impact on every part of the value chain

DISQOVER Technology

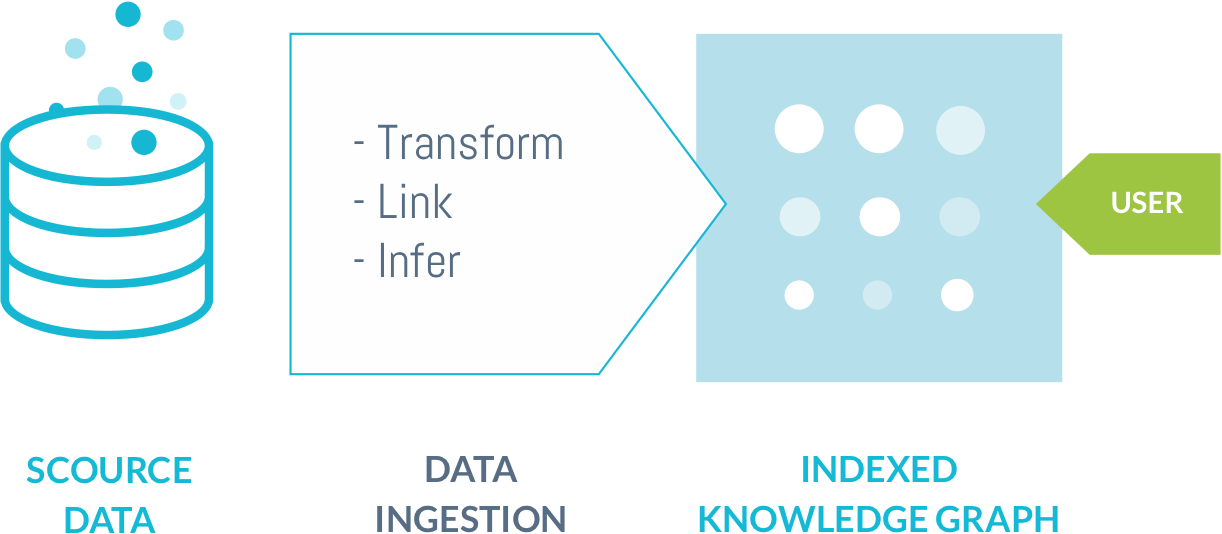

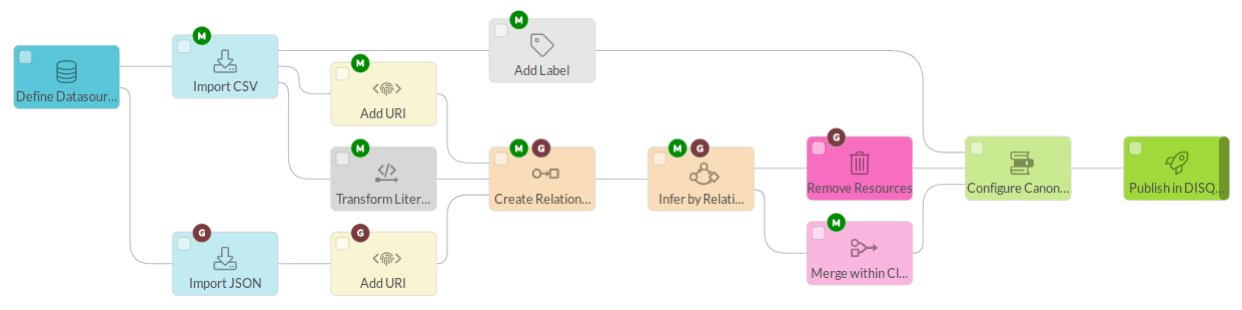

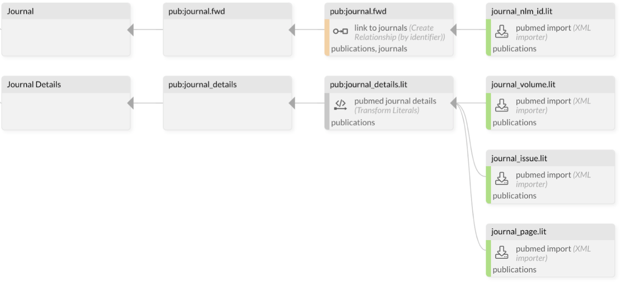

DISQOVER is equipped with a powerful and ultra-fast data ingestion engine. As a data scientist, you can import, manipulate, link, and integrate your own data into DISQOVER using an innovative visual pipeline environment.

The data ingestion engine tracks data dependencies throughout the entire pipeline. As a result, you can see which source data fields contributed to each information field in the DISQOVER database. Conversely, you can see every DISQOVER field that a given source data field contributes to.
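
To make the idea of two-way dependency tracking concrete, here is a minimal sketch of field-level lineage in Python. It is an illustration only and not DISQOVER's API or implementation: the `LineageTracker` class, its methods, and the field names (`crm.first_name`, `trials.site_id`, and so on) are all hypothetical.

```python
from collections import defaultdict


class LineageTracker:
    """Toy field-level lineage graph (hypothetical; not DISQOVER's API)."""

    def __init__(self):
        # Maps each derived field to the fields it was directly computed from.
        self._parents = defaultdict(set)

    def record(self, output_field, input_fields):
        """Register that one pipeline step built output_field from input_fields."""
        self._parents[output_field].update(input_fields)

    def sources_of(self, field):
        """Return the original source fields that feed into `field`."""
        sources, stack, seen = set(), [field], set()
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.add(current)
            parents = self._parents.get(current)
            if parents:
                stack.extend(parents)  # keep walking upstream
            else:
                sources.add(current)   # no recorded parents: a source field
        return sources

    def targets_of(self, source_field):
        """Return every derived field that `source_field` contributes to."""
        return {
            derived
            for derived in list(self._parents)
            if source_field in self.sources_of(derived)
        }


# Two assumed pipeline steps: a merge, then a second derivation on top of it.
tracker = LineageTracker()
tracker.record("full_name", ["crm.first_name", "crm.last_name"])
tracker.record("display_label", ["full_name", "trials.site_id"])

upstream = tracker.sources_of("display_label")
downstream = tracker.targets_of("crm.first_name")
```

In this sketch, `upstream` answers "which source fields contributed to this field?" and `downstream` answers the converse question for a single source field. A production engine would typically store this graph alongside the pipeline definition instead of recomputing it on each query.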

Our easy-to-use interface democratizes access to data through self-service knowledge discovery for both general and expert users.

Somewhere in that ocean of data is the missing puzzle piece you’re looking for. Connect siloed, disparate data and enable accurate and timely decisions.

As your trusted partner, we provide expert advice throughout your journey with us, because fast implementations deliver value fast.

We see our solution at the heart of your ecosystem. Openness and interoperability are essential to make data actionable for people and machines.

Collaborate with your dedicated and passionate ONTOFORCE data expert who understands your use case and speaks your language.

We have earned international recognition and serve global organizations such as AstraZeneca, BMS, UCB, Roche, and many others every day.

© 2024 ONTOFORCE. All rights reserved.